crew resource management

8 results

pages: 543 words: 143,135

Air Crashes and Miracle Landings: 60 Narratives by Christopher Bartlett

Air France Flight 447, air traffic controllers' union, Airbus A320, airport security, Boeing 747, Captain Sullenberger Hudson, Charles Lindbergh, crew resource management, en.wikipedia.org, flag carrier, illegal immigration, it's over 9,000, Maui Hawaii, profit motive, sensible shoes, special drawing rights, Tenerife airport disaster, US Airways Flight 1549, William Langewiesche

As said at the beginning of this narrative, it would be difficult to find a nicer or indeed kinder one. CHAPTER 2 LOSS OF POWER OVER LAND CONSCIENTIOUS CREW FORGET FUEL REMAINING (Portland 1978) Classic Case Heralded CRM (Crew Resource Management) An absurd United Airlines DC-8 accident prompted the introduction of CRM, which initially stood for Cockpit Resource Management, but now allegedly stands for Crew Resource Management to reflect the role of crew working in other parts of the aircraft. [United Airlines Flight 173] On December 28, 1978, a United Airlines McDonnell Douglas DC-8 with flight number 173 was approaching Portland Airport in Oregon, USA.

…

Much of what the captain did and thought about was very commendable, but one has to wonder whether the drip-drip effect of spending so much time thinking about what might happen in the unlikely worst possible scenario did not affect the crewmembers’ grasp of the overall situation. Cockpit Resource Management (CRM) The incident revealed the need for formal policies and programs to ensure aircrew function well as a team, with each having defined complementary duties rather than everyone focusing on a single matter. United Airlines took the experience to heart and became the first to initiate such a program, using some of the techniques already used by business and management consultants. As mentioned at the beginning of this chapter, they called this Cockpit Resource Management (CRM), now changed to Crew Resource Management, to reflect the important role of others, such as cabin crew and even ground staff such as maintenance staff.

…

The official report into the accident mentions that the crop of high corn where the main part of the fuselage ended up upside down hampered rescue efforts by firefighters, and recommended that having agricultural crops in proximity to runways should be reconsidered. Thinking of how the rain sodden ground helped slow Qantas 1 on its 100 mph overrun at Bangkok, one cannot help wondering whether the high corn helped slow the aircraft. Looking at the feat from a piloting perspective, the main lesson does seem to be the importance of Cockpit Resource Management (CRM), though according to Captain Haynes, it was first called Cockpit Leadership Management. Each person performed his allotted task, and ‘ideas were thrown around.’ With hindsight, one idea, which could/should have come to mind, was that the pilots could have transferred fuel from the tanks in the right wing to those on the other side to correct the tendency to bank to the right.

pages: 269 words: 74,955

The Crash Detectives: Investigating the World's Most Mysterious Air Disasters by Christine Negroni

Air France Flight 447, Airbus A320, Boeing 747, Captain Sullenberger Hudson, Charles Lindbergh, Checklist Manifesto, computer age, crew resource management, crowdsourcing, flag carrier, low cost airline, Neil Armstrong, Richard Feynman, South China Sea, Tenerife airport disaster, Thomas Bayes, US Airways Flight 1549

When John Lauber came across the detailed report he called it a “prototype” of an accident in which the crew does not manage the resources available. His cockpit resource management would teach pilots how to do this, in the same way businesses train their managers. “Pilots generally were well trained on aircraft systems and basic flying skills,” he said. But nothing was done to teach them what they needed to know for decision making, communication, and leadership. The Tenerife accident gave Lauber’s work new energy, and in the years to come, cockpit resource management would be changed to “crew resource management,” in recognition that other flight personnel such as mechanics, flight attendants, dispatchers, and air traffic controllers had a role to play in safe flights.

…

If the captain was considered God and everyone else a congregant, you can see how pilots would not/could not speak up even when they saw that something was wrong. John Lauber, working at NASA’s Ames Research Center in California at the time, had already spent several years noting the growing disparity between the reliability of the machine and that of the human flying it. He had visited airlines and spoken about a new concept he called cockpit resource management, or CRM. One of the airlines he visited was KLM Royal Dutch. One of the pilots he met was Captain van Zanten. Lauber remembered, “He was a very impressive guy, a blond, steely-eyed airline pilot. He was a strong-minded personality.” Lauber was pitching CRM as something airlines could use to train pilots to better manage their workplace.

…

Ministry of Transportation, 153 Air Botswana, 201 Airbus A35, 185–86 Airbus A300, 8, 47 Airbus A320, 222, 236, 247 Airbus A321, 99, 130 Airbus A330, 55 Airbus A380, 136, 190, 211, 239–40, 243, 246–47, 253–55 Air Canada Flight 143, 223–26, 234–35, 240–43, 249–50, 255–56 Aircraft Communications Addressing and Reporting System (ACARS), 20, 41, 45–46, 55, 57 air data inertial reference unit (ADIRU), 235–36 Air France Flight 447, 53, 55–58 Air Line Pilots Association (ALPA), 110, 171, 176, 216 Air New Zealand Flight 901, x, 117–31 Antarctic Experience flights, 118, 125, 128–29 Air Registration Board, United Kingdom, 150, 154 air traffic control (ATC) and accident investigation process, 82 and Air New Zealand Flight 901 crash, 120, 127 and Albertina crash, 89 and ANA Flight 692, 168 and Arrow Air crash, 108 and cockpit resource management, 220 and Comet crashes, 155 communications with hypoxic crew, 11, 13, 32–33 and complexity of air safety system, 260 and flight simulations, 252 and Helios Flight 522, 41 and human factors, 198–99, 201 and MH-370, 20–22, 62 and Northwest Flight 188, 227 and Qantas engine loss incident, 247 and radio navigation, 51 and Tenerife runway collision, 214–16 and United Flight 553, 84–85 Airline Training Center Arizona (ATCA), 198 Aizawa, Takeo, 193 Albertina (DC-6), 87, 89, 92–96 All Nippon Airways (ANA), 166–69, 181–82, 187, 192 Allen, A.

pages: 175 words: 54,028

Fly by Wire: The Geese, the Glide, the Miracle on the Hudson by William Langewiesche

Air France Flight 447, Airbus A320, airline deregulation, Bernard Ziegler, Boeing 747, Captain Sullenberger Hudson, Charles Lindbergh, crew resource management, gentrification, New Journalism, two and twenty, US Airways Flight 1549, William Langewiesche

When asked about teamwork in the cockpit during the glide, he said there was little need for it, and little was involved: he had started into the checklist to restart the engines, and Sullenberger had done the flying. The division was plain and simple, and pretty obvious at the moment. You could arrive afterward and call it an exercise in Crew Resource Management—sorry, I mean CRM—if you insisted on fixing things up with formal language. CRM is indeed a useful term. Until recently it stood for Cockpit Resource Management and pertained only to pilots, until someone realized that the C could stand for Crew, allowing flight attendants into the program. Entire industries are built on this sort of progress. But frankly the glide had been very short, with no space for elaboration.

…

Sullenberger said, “Thank you.” The engine manufacturer had no questions. US Airways had no questions. The pilots’ union representative wanted to get back to crew resource management. There wasn’t much to say. In fact, if you wanted to pick one accident in which elaborations on teamwork don’t need to be made, this would be a good one to choose. It was I’ll fly the airplane, you try to restart the engines. But crew resource management has become a central dogma, the sine qua non of airline flying, and because Sullenberger’s landing had been successful, it seemed necessary to mix it in now. Sullenberger was willing to try.

…

The captain was fifty-seven years old, nearing the end of a long and successful career. He had an excellent reputation. The copilot was thirty-nine. His reputation was equally strong. In the Air Force he had once been named Instructor of the Year. Both pilots lived in Florida. They were good family men. They were athletic. They had been schooled repeatedly in Cockpit Resource Management. If asked, they would have sworn to the need for standardization, for regulation, and for what passes as professionalism in the trade. • It was a black night, though some lights may have been in sight. The weather at the airport was mostly clear, with a few clouds scattered about. Cali lies in a long, narrow valley oriented north–south, about 3,200 feet above sea level, and with mountains rising to 14,000 feet on each side.

pages: 394 words: 107,778

Through the Glass Ceiling to the Stars: The Story of the First American Woman to Command a Space Mission by Eileen M. Collins, Jonathan H. Ward

airport security, Apollo 11, Apollo 13, Berlin Wall, Charles Lindbergh, COVID-19, crew resource management, glass ceiling, human-factors engineering, index card, Neil Armstrong, orbital mechanics / astrodynamics, overview effect, pre–internet, Ronald Reagan, Stephen Hawking

The aircraft commander had to be comfortable with many leadership styles and know which ones were appropriate for a time-critical situation, using only his or her experience and judgment. I quickly learned to trust my enlisted crew, as many of them had been doing their jobs for more than a decade. They in turn respected aircraft commanders who listened to them. One of my most valuable leadership lessons was “cockpit resource management” or “crew resource management” (CRM). Developed by NASA and the National Transportation Safety Board in the late 1970s, CRM provides a structured way for flight crews to work through problem situations through better leadership, communication, and decision making. The basic tenets are: know your job and do your job; be aware of what the other crew members are doing; and communicate clearly and check for understanding.

…

See daycare center; nanny Chinn, Glenn, 79, 81 Chlapowski, Sue, 38 Clark, Laurel, 244, 254 Clark AFB, 76 claustrophobia, 55–56, 120 Cleave, Mary, 125, 128 Clervoy, Jean-François (Billy Bob), 181–183, 200, 203 Clinton, Hillary, 206–207, 218 Clinton, Bill, 167, 206–208 closeout crews, 141–144, 157, 161, 189, 221, 268 clutter, importance of managing in space 168 Coats, Mike, 125, 128 Cochran, Jackie, 13, 22, 285 cockpit resource management (or crew resource management, CRM), 84–85 Cocoa Beach, 203, 267 Coke Experiment, 169–171 Cold War, 12 Coleman, Cady, 113, 208, 210, 218–220, 227, 231, 236 community college, 21–22 Collins, Edward (Eddie, brother), 7, 14, 16–17 Collins, James Edward (father), 7–12, 19, 23, 158, 177, 244, 280 Collins, James (Jim/Jay brother), 7, 14, 18, 129, 280 Collins, Margaret (Margy, sister), 7, 16, 18, 129 Collins, Marie Reidy (grandmother), 167 Collins, Michael, 218, 285 Collins’ Restaurant and Pub (the Place), 9 Colorado Springs, Colorado, 88 Colosseum, 111–112 Columbia flow liner cracks, 241 fuel leaks, 132 landing, 242 maintenance, 214 modifications to carry Chandra, 210 STS-32R landing, 116 STS-50, 141–142 STS-55, 143–144 tribute to, 274 wreckage, 248 See also STS-93, STS-107 Columbia Accident Investigation Board, 249 Columbus, Ohio, 25 combat, 79–80 exclusion policy, 73–74, 81, 87, 113, 158 pay, 81 Comet Hale-Bopp, 197 communications skills, 59, 84, 90, 96, 239, 250–251 competition, 70, 103, 117, 250 complacency, 68 Compton Gamma Ray Observatory, 205 computerization of spacecraft, 145 Congress, 177 Conrad, Pete, 135 control and performance concept, 46, 59, 153 risk, 244 self, 9, 19, 26, 45, 110, 180, 229, 237 vehicle, 1, 5, 41, 61, 67, 105, 107, 151, 174, 194, 213, 232, 261 Cooney, Wilson, 47 Cooper-Harper scale, 104–105 copilot training course, 75 Coral Run, 76 Cornell University, 23 Corning Community College, 21 cosmonauts, 149, 154–155, 166–167, 182, 185, 194–195, 274 “The Cosmonaut’s Song,” 187 Country Cork, Ireland, 8 Courvoisier 
Cognac, 192 Covey, Dick, 110–111, 125–126 CRATER, 253 creativity, 182, 254–255 Crew Equipment Interface Test (CEIT), 140, 156 crew notebook, 196 Crew Transport Vehicle, 175, 203, 277 Crossfield, Scott, 92, 103 culture change, 77–78, 249–255 curiosity, 13, 40, 128, 285 Currie, Nancy, 132 Cyprus, 77 danger, 188, 212, 221, 223–227 on space shuttle, 201 data collecting, 106, 123, 152, 183, 203, 279 supporting launch decision, 268 transforming into information, 104 unreliable, 166, 194, 220 dating, 39, 85–86 Davis, AJ, 29–30 Davis, Ernie, 7, 285 Davis, Jan, 142 daycare center, 187 Dayton, Ohio, 112 decision-making, 40, 109, 135 dehydration, 53, 163, 279 delays, 150–151 Delta Air Lines, 92, 240, 279 departure from controlled flight, 107–109 depressurization, 39 desk job, 85, 238 Diego Garcia, 76 differential calculus, 89 digital control unit (DCU), 224–225 disappointment, 16, 147–148, 151, 231 discipline, 11, 43–44, 50, 65, 90, 108, 181, 187, 255, 285 Discovery flow liner cracks, 241 rudder/speed break actuators (RSBs), 261 STS-41D pad fire, 143–144 wiring repairs, 234 See also STS-63, STS-114 discrimination, 179 Dittemore, Ron, 239 dive flight, 109 dogfighting, 64 Donaldson, Sam, 207 Double Diego mission, 76, 86 Dover Air Force Base, 30, 79 dream, 92, 106, 118, 127, 129, 132, 196, 206–208, 274, 286 achieving, 1, 95, 127, 129, 153, 158, 206, 281–282 importance of, 208 See also goals dream sheet, 73–74 Eadie, Bob, 70 Earhart, Amelia, 13 Earth curvature, 6 observation, 137, 281 reflection, 198–199 return to reaction, 175–176 from space, 198 Edison, Thomas, 285 Edwards AFB, 95–96, 98–99, 275, 277 parachute room, 116 Eglin AFB, 113 ego.

…

As commander, I spent many hours negotiating how Michel could access the information he needed to fulfill his role on the mission. But, despite my efforts, we ended up being the only space shuttle crew to fly an IUS without training at the Boeing plant. New Commander, New Pilot My pilot, Jeff Ashby, and I were both new in our respective roles. He and I practiced together closely to perfect our cockpit resource management techniques as well as all of the technical challenges of flying the space shuttle and operating its systems. The Mission Specialist 2 on the shuttle’s crew serves as the flight engineer and has important roles during ascent and entry. Steve flew as MS 2 on all four of his previous missions.

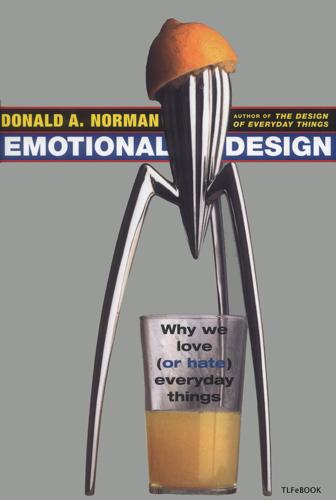

Emotional design: why we love (or hate) everyday things by Donald A. Norman

A Pattern Language, crew resource management, Dean Kamen, industrial robot, job automation, language acquisition, Neal Stephenson, Rodney Brooks, Vernor Vinge, Yogi Berra

The commercial aviation community has done an excellent job of fighting this tendency with its program of "Crew Resource Management." All modern commercial aircraft have two pilots. One, the more senior, is the captain, who sits in the left-hand seat, while the other is the first-officer, who sits in the right-hand seat. Both are qualified pilots, however, and it is common for them to take turns piloting the aircraft. As a result, they are referred to by the terms "pilot flying" and "pilot not flying." A major component of crew resource management is that the pilot who is not flying be an active critic, continually checking and questioning the actions taken by the pilot who is flying.

…

A fire upon the deep. New York: Tor. Weizenbaum, J. (1976). Computer power and human reason: From judgment to calculation. San Francisco: W. H. Freeman. Whyte, W. H. (1988). City: Rediscovering the center (1st ed.). New York: Doubleday. Wiener, E. L., Kanki, B. G., & Helmreich, R. L. (1993). Cockpit resource management. San Diego: Academic Press. Wolf, M. J. P. (2001). The medium of the video game (1st ed.). Austin: University of Texas Press. See "Genre and the Video Game" at http://www.robinlionheart.com/gamedev/genres.xhtml. Index Accidents, 28, 78, 203, 204, 205, 229 Advertising, 41, 42, 43, 45, 54, 78, 79, 87, 91, 92, 104, 105, 152 Aesthetics, 4, 8, 18, 19, 87, 102, 103, 104, 105, 120, 225 as culturally dependent, 18 and usability, 19 See also Beauty Aesthetics of the Japanese Lunchbox, The (Ekuan), 101, 102(fig.), 103 Affect, 11, 12, 13, 18, 20, 24, 25, 26, 27, 28, 29, 32, 36, 37, 38, 95, 103, 119, 124, 125, 126, 135, 138, 140, 154, 166, 167, 169, 176, 179, 182, 184, 185, 203, 211 behavioral level of, 124, 125 negative, 20, 22, 24, 25, 26, 27, 28, 29, 32, 36, 95, 119, 138, 154, 179.

…

Email received in response to my query on the CHI discussion group. May 2002. (CHI is the Computer-Human Interaction society.) 143 "It's human nature to trust our fellow man" (Mitnick & Simon, 2002, p. 32.) 144 "social psychologists Bibb Latane and John Darley" and "Bystander apathy" (Latane & Darley, 1970) 145 "Crew Resource Management" (Wiener, Kanki, & Helmreich, 1993) 146 "As I was writing this book" (Hennessy, Patterson, Lin, & National Research Council Committee on the Role of Information Technology in Responding to Terrorism, 2003) 148 "Everywhere is nowhere." Thanks to John King, Dean of the School of Information at University of Michigan for the quotation from Seneca. 150 "Instant messenger."

pages: 309 words: 100,573

Cockpit Confidential: Everything You Need to Know About Air Travel: Questions, Answers, and Reflections by Patrick Smith

Airbus A320, airline deregulation, airport security, Atul Gawande, Boeing 747, call centre, Captain Sullenberger Hudson, collective bargaining, crew resource management, D. B. Cooper, high-speed rail, inflight wifi, Korean Air Lines Flight 007, legacy carrier, low cost airline, Maui Hawaii, Mercator projection, military-industrial complex, Neil Armstrong, New Urbanism, pattern recognition, race to the bottom, Skype, Tenerife airport disaster, US Airways Flight 1549, zero-sum game

Requirements for an ATP include a minimum of 1,500 hours of flight time (broken down over various categories) and satisfactory completion of written and in-flight examinations. Additionally the law will redefine the ATP certificate itself, emphasizing the operational environments of commercial air carriers and requiring specialized training in things like cockpit resource management (CRM), crew coordination, and so on. These changes will make it easier to weed out pilots who lack the acumen for airline operations. For those who progress, it will allow an easier transition from general aviation into the high-demand training environment at a regional. It will lower their training costs and, ultimately, make for safer cockpits.

pages: 409 words: 105,551

Team of Teams: New Rules of Engagement for a Complex World by General Stanley McChrystal, Tantum Collins, David Silverman, Chris Fussell

Airbus A320, Albert Einstein, Apollo 11, Atul Gawande, autonomous vehicles, bank run, barriers to entry, Black Swan, Boeing 747, butterfly effect, call centre, Captain Sullenberger Hudson, Chelsea Manning, clockwork universe, crew resource management, crowdsourcing, driverless car, Edward Snowden, Flash crash, Frederick Winslow Taylor, global supply chain, Henri Poincaré, high batting average, Ida Tarbell, information security, interchangeable parts, invisible hand, Isaac Newton, Jane Jacobs, job automation, job satisfaction, John Nash: game theory, knowledge economy, Mark Zuckerberg, Mohammed Bouazizi, Nate Silver, Neil Armstrong, Pierre-Simon Laplace, pneumatic tube, radical decentralization, RAND corporation, scientific management, self-driving car, Silicon Valley, Silicon Valley startup, Skype, Steve Jobs, supply-chain management, systems thinking, The Wealth of Nations by Adam Smith, urban sprawl, US Airways Flight 1549, vertical integration, WikiLeaks, zero-sum game

Helmreich, “On Error Management: Lessons from Aviation,” British Medical Journal, 320, no. 7237 (2000): 782. CRM-trained . . . http://www.apa.org/research/action/crew.aspx. juniors to speak more assertively . . . Helmreich et al., “Evolution of Crew Resource Management Training,” 20. “charm school” . . . Helmreich et al., “Evolution of Crew Resource Management Training,” 21. In 1989, another United . . . National Transportation Safety Board, Aircraft Accident Report: United Airlines Flight 232 McDonnell Douglas DC-10-10 Sioux Gateway Airport; Sioux City, Iowa, July 17, 1989, NTSB Number AAR-90-06 (Washington, D.C., 1991), 75.

…

., 1991), 75. no safety procedure . . . http://clear-prop.org/aviation/haynes.html. In 1981, United . . . Robert L. Helmreich, Ashleigh C. Merritt, and John A. Wilhelm, “The Evolution of Crew Resource Management Training in Commercial Aviation,” International Journal of Aviation Psychology 9, no. 1 (1999): 20. 185 of the 296 people . . . American Psychological Association, Making Air Travel Safer Through Crew Resource Management, February 2014, http://www.apa.org/research/action/crew.aspx. thirty-one communications per minute . . . American Psychological Association, Making Air Travel Safer. “If we had not let” . . . http://clear-prop.org/aviation/haynes.html.

…

National Transportation Safety Board, AAR 1989, 76. more than 90 percent . . . Barbara G. Kanki, Robert L. Helmreich, and José M. Anca, “Why CRM? Empirical and Theoretical Bases of Human Factors Training,” in Crew Resource Management, 2nd ed. (Amsterdam: Academic Press/Elsevier, 2010), 35. See original study: R. L. Helmreich and J. A. Wilhelm, “Outcomes of Crew Resource Management Training,” International Journal of Aviation Psychology 1 (1991): 287–300. 2012 and 2013 had the fewest deaths and fatalities . . . 2012 had fewer crashes, but 2013 had the fewest casualties, with 265 fatalities (compared with the past ten-year average of 720 fatalities).

pages: 410 words: 114,005

Black Box Thinking: Why Most People Never Learn From Their Mistakes--But Some Do by Matthew Syed

Abraham Wald, Airbus A320, Alfred Russel Wallace, Arthur Eddington, Atul Gawande, Black Swan, Boeing 747, British Empire, call centre, Captain Sullenberger Hudson, Checklist Manifesto, cognitive bias, cognitive dissonance, conceptual framework, corporate governance, creative destruction, credit crunch, crew resource management, deliberate practice, double helix, epigenetics, fail fast, fear of failure, flying shuttle, fundamental attribution error, Great Leap Forward, Gregor Mendel, Henri Poincaré, hindsight bias, Isaac Newton, iterative process, James Dyson, James Hargreaves, James Watt: steam engine, Johannes Kepler, Joseph Schumpeter, Kickstarter, Lean Startup, luminiferous ether, mandatory minimum, meta-analysis, minimum viable product, publication bias, quantitative easing, randomized controlled trial, selection bias, seminal paper, Shai Danziger, Silicon Valley, six sigma, spinning jenny, Steve Jobs, the scientific method, Thomas Kuhn: the structure of scientific revolutions, too big to fail, Toyota Production System, US Airways Flight 1549, Wall-E, Yom Kippur War

On the thirtieth page, in the dry language familiar in such reports, it offered the following recommendation: “Issue an operations bulletin to all air carrier operations inspectors directing them to urge their assigned operators to insure that their flight crews are indoctrinated in principles of flightdeck resource management, with particular emphasis on the merits of participative management for captains and assertiveness training for other cockpit crewmembers.” Within weeks, NASA had convened a conference to explore the benefit of a new kind of training: Crew Resource Management. The primary focus was on communication. First officers were taught assertiveness procedures. The mnemonic that has been used to improve the assertiveness of junior members of the crew in aviation is called P.A.C.E. (Probe, Alert, Challenge, Emergency).* Captains, who for years had been regarded as big chiefs, were taught to listen, acknowledge instructions, and clarify ambiguity.

…

To the public it was an episode of sublime individualism; one man’s skill and calmness under pressure saving more than a hundred lives. But aviation experts took a different view. They glimpsed a bigger picture. They cited not just Sullenberger’s individual brilliance but also the system in which he operates. Some made reference to Crew Resource Management. The division of responsibilities between Sullenberger and Skiles occurred seamlessly. Seconds after the bird strike, Sullenberger took control of the aircraft while Skiles checked the quick-reference handbook. Channels of communication were open until the very last seconds of the flight.

…

This was a fascinating discussion, which largely took place away from the watching public. But even this debate obscured the deepest truth of all. Checklists originally emerged from a series of crashes in the 1930s. Ergonomic cockpit design was born out of the disastrous series of accidents involving B-17s. Crew Resource Management emerged from the wreckage of United Airlines 173. This is the paradox of success: it is built upon failure. It is also instructive to examine the different public responses to McBroom and Sullenberger. McBroom, we should remember, was a brilliant pilot. His capacity to keep his nerve as the DC8 careered down, flying between trees, avoiding an apartment block, finding the minimum impact force for a 90-ton aircraft hitting solid ground, probably saved the lives of a hundred people.

pages: 362 words: 97,288

Ghost Road: Beyond the Driverless Car by Anthony M. Townsend

A Pattern Language, active measures, AI winter, algorithmic trading, Alvin Toffler, Amazon Robotics, asset-backed security, augmented reality, autonomous vehicles, backpropagation, big-box store, bike sharing, Blitzscaling, Boston Dynamics, business process, Captain Sullenberger Hudson, car-free, carbon footprint, carbon tax, circular economy, company town, computer vision, conceptual framework, congestion charging, congestion pricing, connected car, creative destruction, crew resource management, crowdsourcing, DARPA: Urban Challenge, data is the new oil, Dean Kamen, deep learning, deepfake, deindustrialization, delayed gratification, deliberate practice, dematerialisation, deskilling, Didi Chuxing, drive until you qualify, driverless car, drop ship, Edward Glaeser, Elaine Herzberg, Elon Musk, en.wikipedia.org, extreme commuting, financial engineering, financial innovation, Flash crash, food desert, Ford Model T, fulfillment center, Future Shock, General Motors Futurama, gig economy, Google bus, Greyball, haute couture, helicopter parent, independent contractor, inventory management, invisible hand, Jane Jacobs, Jeff Bezos, Jevons paradox, jitney, job automation, John Markoff, John von Neumann, Joseph Schumpeter, Kickstarter, Kiva Systems, Lewis Mumford, loss aversion, Lyft, Masayoshi Son, megacity, microapartment, minimum viable product, mortgage debt, New Urbanism, Nick Bostrom, North Sea oil, Ocado, openstreetmap, pattern recognition, Peter Calthorpe, random walk, Ray Kurzweil, Ray Oldenburg, rent-seeking, ride hailing / ride sharing, Rodney Brooks, self-driving car, sharing economy, Shoshana Zuboff, Sidewalk Labs, Silicon Valley, Silicon Valley startup, Skype, smart cities, Smart Cities: Big Data, Civic Hackers, and the Quest for a New Utopia, SoftBank, software as a service, sovereign wealth fund, Stephen Hawking, Steve Jobs, surveillance capitalism, technological singularity, TED Talk, Tesla Model S, The Coming Technological Singularity, The Death and Life 
of Great American Cities, The future is already here, The Future of Employment, The Great Good Place, too big to fail, traffic fines, transit-oriented development, Travis Kalanick, Uber and Lyft, uber lyft, urban planning, urban sprawl, US Airways Flight 1549, Vernor Vinge, vertical integration, Vision Fund, warehouse automation, warehouse robotics

Keep ignoring it, and after 15 seconds it disengages. If Tesla is an absentminded babysitter, Super Cruise is a helicopter parent. I don’t envy the designers of Autopilot and Super Cruise. Making partial-self-driving technology both roadworthy and appealing to car buyers isn’t easy. In aviation, an entire science of “crew resource management” emerged in the 1980s to reduce the risks of heavy cockpit automation. Comparatively speaking, automakers are just getting started. Yet until full vehicle automation can be achieved—and computers relieve us entirely of all driving tasks—our eyes, ears, and minds must be managed as carefully as torque, throttle, and traction.

…

Hawkins, “Tesla’s Autopilot Was Engaged When Model 3 Crashed into Truck, Report States,” The Verge, May 16, 2019, https://www.theverge.com/2019/5/16/18627766/tesla-autopilot-fatal-crash-delray-florida-ntsb-model-3. 28 Brown spent a mere 25 seconds of the final 37 minutes of the trip: National Transportation Safety Board, Collision between a Car Operating with Automated Vehicle Control Systems and a Tractor-Semitrailer Truck near Williston, Florida, Accident Report NTSB/HAR-17/02, PB2017-102600, October 12, 2017, 15. 28 A single light touch: National Transportation Safety Board, Collision between a Car, 11. 28 an audible alert after 60 seconds: David Shepardson, “Tesla, Others Seek Ways to Ensure Drivers Keep Their Hands on the Wheel,” Reuters, last modified June 23, 2017, https://www.reuters.com/article/us-usa-autos-selfdriving-safety/tesla-others-seek-ways-to-ensure-drivers-keep-their-hands-on-the-wheel-idUSKBN19E1ZA. 29 riding in the passenger seat: Telegraph Reporters, “Tesla Owner Who Turned On Car’s Autopilot Then Sat in Passenger Seat While Travelling on the M1 Banned from Driving,” The Telegraph, April 28, 2018, https://www.telegraph.co.uk/news/2018/04/28/tesla-owner-turned-cars-autopilot-sat-passenger-seat-travelling/. 29 The intoxicated driver was found passed out: Doug Smith, “CHP Uses Autopilot to Stop a Tesla Model S with a Sleeping Driver at the Wheel,” Los Angeles Times, December 3, 2018, https://www.latimes.com/local/lanow/la-me-ln-tesla-driver-asleep-20181202-story.html. 29 “Because of the impressive ability of Tesla’s Autopilot”: Patrick Olsen, “CR Finds That These Features Making Driving Easier but Introduce New Safety Risks,” Consumer Reports, October 4, 2018, https://www.consumerreports.org/autonomous-driving/cadillac-tops-tesla-in-automated-systems-ranking/. 29 one-third of the three-hour trip looking away: Hod Lipson and Melba Kurman, Driverless: Intelligent Cars and the Road Ahead (Cambridge, MA: MIT Press, 2018), 60–61.
29 “pointed at the driver’s face”: Alex Roy, “The Half-Life of Danger: The Truth behind the Tesla Model X Crash,” The Drive, April 16, 2018, http://www.thedrive.com/opinion/20082/the-half-life-of-danger-the-truth-behind-the-tesla-model-x-crash. 29 after 15 seconds it disengages: Jonathan M. Gitlin, “GM Rolling Out Its Amazing Super Cruise Tech to More Cars and Brands,” Ars Technica, June 6, 2018, https://arstechnica.com/cars/2018/06/butt-kicking-super-cruise-coming-to-all-my2020-cadillacs-more-gms-later/. 29 an entire science of “crew resource management”: Federal Aviation Administration, “The History of CRM,” FAA TV, 24:16, April 5, 2012, https://www.faa.gov/tv/?mediaId=447. 30 how we spend the time . . . has shifted: Chen Song and Chao Wei, “Travel Time Use over Five Decades,” Transportation Research Part A: Policy and Practice 116 (October 2018): 73–96. 30 Audi’s concept car of the future: Chris Paukert, “Audi’s Long Distance Lounge Hypes a Smarter Autonomous Future,” Roadshow, CNET, June 14, 2017, https://www.cnet.com/roadshow/news/audi-long-distance-lounge-autonomous-concept-exclusive-hands-on-video/. 30 self-driving vehicles could free up 250 million hours: Roger Lanctot, Accelerating the Future: The Economic Impact of the Emerging Passenger Economy (Strategy Analytics, June 2017), 6, https://newsroom.intel.com/newsroom/wp-content/uploads/sites/11/2017/05/passenger-economy.pdf. 30 $150 billion in the US alone: Securing America’s Future Energy, America’s Workforce and the Self-Driving Future: Realizing Productivity Gains and Spurring Economic Growth, June 2018, 22, https://avworkforce.secureenergy.org/wp-content/uploads/2018/06/SAFE_AV_Policy_Brief.pdf.
31asked how they expect to spend their saved time: Eva Fraedrich et al., User Perspectives on Autonomous Driving: A Use-Case-Driven Study in Germany (Berlin, Germany: DLR Institute of Transport Research, 2016), 13; Chris Tennant et al., Executive Summary, Autonomous Vehicles—Negotiating a Place on the Road (London, UK: London School of Economics, 2016), 1–10. 31“Why do all of these interior designs”: Alanis King, “Autonomous Cars Aren’t Even Here Yet and I’m Already Bored with Them,” Jalopnik, September 11, 2017, https://jalopnik.com/autonomous-cars-arent-even-here-yet-and-im-already-bore-1803756153. 31Audi has recruited Disney: Reese Counts, “We Try Audi and Disney’s New In-Car Entertainment System on the Track,” Autoblog, January 9, 2019, https://www.autoblog.com/2019/01/09/audi-disney-holoride-car-vr-entertainment/. 31Kia built a concept car: Laura Bliss, “The ‘Driverless Experience’ Looks Awfully Distracting,” CityLab, January 11, 2019, https://www.citylab.com/transportation/2019/01/self-driving-car-technology-consumer-electronics-show/580027/. 31bigger . . . than the entire auto industry today: Lanctot, Accelerating the Future, 5. 31serve up precision-targeted media: Joann Muller, “One Big Thing: What Your Car Will Know about You,” Axios, May 10, 2019, https://www.axios.com/newsletters/axios-autonomous-vehicles-7b382e7a-e9f1-466b-9c7b-33e4aadc03f4.html; sensors. . . uniquely identify your heartbeat: “Goode Intelligence Forecasts That Biometrics Market for the Connected Car Will Be Just under $1bn by 2023,” Goode Intelligence, November 13, 2017, https://www.goodeintelligence.com/wp-content/uploads/2017/11/Goode-Intelligence-Biometrics-for-the-Connected-Car_Nov17_-news_release-13112017.pdf. 32GM already tracks: Jamie LaReau, “GM Tracked Radio Listening Habits for 3 Months: Here’s Why,” Detroit Free Press, October 1, 2018, https://www.freep.com/story/money/cars/general-motors/2018/10/01/gm-radio-listening-habits-advertising/1424294002/. 
32“We know how long they’ve lived”: Phoebe Wall Howard, “Data Could Be What Ford Sells Next as It Looks for New Revenue,” Detroit Free Press, November 13, 2018, https://www.freep.com/story/money/cars/2018/11/13/ford-motor-credit-data-new-revenue/1967077002/. 33odds of a crash instantly double: National Highway Traffic Safety Administration, Overview of the National Highway Traffic Safety Administration’s Driver Distraction Program, DOT HS 811 299, April 2010, https://www.nhtsa.gov/sites/nhtsa.dot.gov/files/811299.pdf. 33road crashes in the US declined: Wikipedia, s.v.

…

“automated” vehicles, 39 benefits from, 9–10, 155, 241 disengagements and, 41–42 early self-steering schemes, 5–6 electrification as symbiotic technology, 54–55 “fifth-generation” (5G) wireless grid and, 42 in-car media and interior design, 30–31 in-car surveillance and driver monitoring, 31–32 and increased importance of driving, 43–45 infrastructure and cellular grid, 40–41, 42–43 modern-day myths about future, xv–xvi predicted growth in numbers, 10, 11–12 promise of, 8–12 terminology and, 45–47 see also specific topics automation airplane “crew resource management,” 29–30 dockless systems and, 65–66, 70–71 effect on pilot training, 45 electrification as symbiotic technology, 54–55 fear of intelligent automobiles, 39, 43, 45 impact on jobs, 149–56 outdated cultural understanding of, 80–81 and scooters, 65–66, 70–71 task model for computerization of work, 150–54, 151, 155 of taxis, predicted by 2030, 10–11 urban concentration and, 186–87 see also automated vehicles autonomists disengagements and, 41–42 inevitability of full autonomy, 39, 95, 96 minimal role of government, 40 overreaches and false arguments of, 40–41, 73, 99 overview, 39–43 platooning and, 70 public transit and, 214–15, 216 self-driving trucks for freight, 122 “autonomous” vs.

pages: 342 words: 101,370

Test Gods: Virgin Galactic and the Making of a Modern Astronaut by Nicholas Schmidle

Apollo 11, bitcoin, Boeing 737 MAX, Charles Lindbergh, Colonization of Mars, crew resource management, crewed spaceflight, D. B. Cooper, Dennis Tito, Donald Trump, dual-use technology, El Camino Real, Elon Musk, game design, Jeff Bezos, low earth orbit, Neil Armstrong, no-fly zone, Norman Mailer, Oklahoma City bombing, overview effect, private spaceflight, Ralph Waldo Emerson, risk tolerance, Ronald Reagan, Scaled Composites, Silicon Valley, SpaceShipOne, Stephen Hawking, Tacoma Narrows Bridge, time dilation, trade route, twin studies, vertical integration, Virgin Galactic, X Prize

But they were really rehearsals, a chance for them to see who laughed at what jokes or how they responded to their own janky PowerPoint presentations. At least for Stucky, for whom one revelation from the crash was the risk of communication breakdown in the cockpit, how muddy language contributed to a failure of what aviators called “crew resource management,” or CRM. Stucky held Alsbury responsible above all for losing focus and unlocking the feather that day. But he wondered what Siebold was thinking when Alsbury said, “Unlocking,” and how come Siebold didn’t hear Alsbury and try to do something about it? Stucky hoped, had he been the pilot, that he would have heard Alsbury and had the wherewithal to slap Alsbury’s hand away before he could unlock the feather.

…

She was obviously disturbed, and Stucky offered to drive her back to Mojave. But she declined and said she wanted to go see the other impact points. In the car, on the way back to Denny’s, he told her, “I know you don’t want to hear that something good came of all this.” But if they learned anything from the crash it was the importance of crew resource management, of scripting every word and action during the flight. He had recently gone back to NASTAR with the other pilots and they had all committed a blunder, calling out “trimming” when they were still subsonic. He explained to Saling that if they trimmed—entering the gamma turn, going sharply nose up—while subsonic, before reaching Mach 1, it would aggravate the transonic pitch-up and could lead to a crash.

…

Cape Canaveral, Florida “captive carry” Carlos (kennel owner) Carr, David Carter, Manley (“Sonny”) Cassegrain telescope Castleberry, Tarah centrifuge training Challenger Chao, Elaine China China Lake, California Choi, Christine CIA (Central Intelligence Agency) Clancy, Tom CNBC CNN Coeur d’Alene, Idaho Colby, Luke crash of SpaceShipTwo and liquid engine and plastic-fuel motor designed by at SpaceShipTwo flight test “cold flow” test explosion Cold War Collier, Robert J. Collins, Michael Columbia compartmentalization Conroy Pat, The Great Santini Covey, Stephen Cowan, David Cramer, Jim “crew resource management” (CRM) Cronkite, Walter Cruise, Tom Crump, Jerry Darwin, Charles “dead-banding” Death Wish Coffee Desert Skywalkers Diamandis, Peter disbonding Discovery Drina River Dryden, Hugh Dryden Flight Research Center, Edwards Air Force Base Eclipse Project Edwards Air Force Base Emerson, Ralph Waldo Emirati Engle, Joe Ericson, Todd briefs board on safety issues “captive carry” flight and feud with Stucky h-stab issues and interviewed by O’Donoghue resignation of safety concerns of F-4 pilots F-18 F-18 pilots F-22 F-117 F-117 squadron FAA FAITH (Final Assembly, Integration, and Test Hangar) Falcon 9 feather Fédération Aéronautique Internationale (FAI) “figures of merit” First Man Firth, Jonathan Fisch, Ralph Fischer, Jack flaking issue flox Ford, Harrison forward air controllers (FACs) Friday Night Lights Fritsinger, Keith Future Astronaut Newsletter Gagarin, Yuri Galileo Gingrich, Newt Glenn, John “glide cone” G-LOC Goldfein, David Goldin, Daniel Goodman, Andy Goražde, Bosnia Gordon, Randy GPS satellites Grant (student) Grey Goose Griggs, David Grissom, Gus Grissom, Lowell Grundy, Bill Gulf of Tonkin Gulf War Gutierrez, Domingo gyros Hadfield, Chris Haggard, Roy Haley, Andrew Hart, Christopher Hawking, Stephen Hoey, Bob Hoffman, Abbie Holbrook, Brandi Holbrook, Bryon horizontal stabilizer (h-stab) “hot fire” Howell, Norm Hunt, Kyle Hussein, Saddam hybrid motors Idaho 
International Space Station International Traffic in Arms Regulations legislation (ITAR) Iraq Iraqi Air Force Iraq War Ivens, Todd JANET (Joint Air Network for Employee Transportation) Jean, Mark Jeppesen, Elrey John, Elton Jonathan (Sascha Stucky’s husband) Jones, Doug Jones, Steve Jordan, Michael Kampner, Matt Kapton Karadžić, Radovan Kármán line Kelly, Mark Kennedy, John F.

pages: 218 words: 70,323

Critical: Science and Stories From the Brink of Human Life by Matt Morgan

agricultural Revolution, Atul Gawande, biofilm, Black Swan, Checklist Manifesto, cognitive dissonance, crew resource management, Daniel Kahneman / Amos Tversky, David Strachan, discovery of penicillin, en.wikipedia.org, hygiene hypothesis, job satisfaction, John Snow's cholera map, meta-analysis, personalized medicine, publication bias, randomized controlled trial, Silicon Valley, stem cell, Steve Jobs, sugar pill, traumatic brain injury

His introduction of the World Health Organization’s ‘Surgical Safety Checklist’ has saved millions of lives by ensuring that simple critical steps, such as checking a patient’s name and allergies, are carried out for each and every operation. We have now adapted his checklists for intensive care procedures such as tracheotomies and daily ward rounds. The second strand has borrowed techniques used in industries such as aviation to allow improved team behaviour during a crisis. Crew resource management (CRM) empowers junior staff to question the decisions made by senior members of the team, flattening hierarchy and thereby improving safety. CRM can help teams come together in the fog of a disaster and work together effectively and safely. During emergencies in critical care, I now take a step backwards rather than forwards to get an overview of the situation, assign roles and act on good ideas provided by others.

…

They use language such as ‘lean management’ wrenched from the metal fist of Japan’s post-war car industry and try to apply it to a bloodied operating theatre. It can help medicine when we selectively borrow techniques from safety-conscious industries. Every day my ward round benefits from using crew-resource management theory and checklists founded in aviation. Other strategies can be helpful when trying to improve the efficiency of operating lists, allowing more knee replacement surgeries to be carried out for the same amount of effort. What these industrial standards do not tell us, however, is how critical care should be delivered at 2 a.m. during a surprisingly brutal cold snap.

Reaper Force: The Inside Story of Britain’s Drone Wars by Dr Peter Lee

crew resource management, Daniel Kahneman / Amos Tversky, digital map, illegal immigration, job satisfaction, MITM: man-in-the-middle, no-fly zone, operational security, QWERTY keyboard, Skype

Supervises Reaper crews and operations from the Squadron Operations Room
BDA Battle damage assessment
BUDDY LASE When two aircraft and crews work together to strike a target – one aircraft fires a laser-guided missile or bomb, while a second uses its laser-guidance system to ensure that the weapon hits the target that the laser is ‘lighting up’
CAO Casualty Assistance Officer
CAOC Combined Air Operations Centre
CASEVAC Casualty evacuation
CAT Flying category (e.g. Combat Ready)
CDE Collateral damage estimate
CIVCAS Civilian casualties
CNO Casualty Notification Officer
CRM Crew resource management. The sharing out of responsibilities and tasks between the three crew members
COMMS Communications. Commonly by radio, electronic signal or secure military internet
CPL Corporal
DICKING The observation of military personnel and movements by, usually, low-level, unarmed enemy called ‘dickers’ (in Afghanistan they would often be young boys).

…

He was the navigator in the back of an F-4 Phantom fighter jet on an air-to-air gunnery exercise. He and his pilot successfully shot the towed banner that was their target, which then wrapped across the front of their aircraft as they flew into it. On a UK Reaper squadron, additional layers of supervision have been added to prevent such events from happening. Crew resource management (CRM) – sharing out the responsibilities of a particular task between the three crew members – should stop everyone being fixated on just one thing. However, every so often even an experienced, intelligent, committed crew can get overly focused on a particular goal. Perhaps it is adrenaline and the fight-or-flight impulse that causes their focus to zoom in too far and prevents them from seeing the wider picture that is unfolding before them.

pages: 230 words: 71,320

Outliers by Malcolm Gladwell

affirmative action, Bill Gates: Altair 8800, Boeing 747, computer age, corporate raider, crew resource management, medical residency, old-boy network, Pearl River Delta, popular electronics, power law, Silicon Valley, Steve Ballmer, Steve Jobs, union organizing, upwardly mobile, why are manhole covers round?

If the first officer had been the captain, would he have hinted three times? No, he would have commanded, and the plane wouldn't have crashed. Planes are safer when the least experienced pilot is flying, because it means the second pilot isn't going to be afraid to speak up. Combating mitigation has become one of the great crusades in commercial aviation in the past fifteen years. Every major airline now has what is called “Crew Resource Management” training, which is designed to teach junior crew members how to communicate clearly and assertively. For example, many airlines teach a standardized procedure for copilots to challenge the pilot if he or she thinks something has gone terribly awry. (“Captain, I'm concerned about...” Then, “Captain, I'm uncomfortable with...”

pages: 242 words: 81,001

What Could Possibly Go Wrong?: The Highs and Lows of an Air Ambulance Doctor by Tony Bleetman

airport security, crew resource management, Kickstarter, low earth orbit

They were taught about the aircraft and its systems and sat exams equivalent to those required by private pilots. They also became proficient in air law, navigation, aircraft marshalling, refuelling, emergency drills, meteorology and, after a few flights, creative swearing. In essence, they became non-handling co-pilots. A big part of their training was Crew Resource Management (CRM), which encouraged effective communications and team-working in a busy cockpit. In the blag-of-all-blags, Porky even managed to get a Robinson-22 light helicopter for them to attempt to fly so they could feel the effects of controls and begin to appreciate what a helicopter pilot has to do.

pages: 415 words: 123,373

Inviting Disaster by James R. Chiles

air gap, Airbus A320, airline deregulation, Alignment Problem, Apollo 11, Apollo 13, Boeing 747, crew resource management, cuban missile crisis, Exxon Valdez, flying shuttle, Gene Kranz, Maui Hawaii, megaproject, Milgram experiment, Neil Armstrong, North Sea oil, Piper Alpha, Recombinant DNA, Richard Feynman, Richard Feynman: Challenger O-ring, risk tolerance, Ted Sorensen, time dilation

It had indeed been working, but the response to emergency controls was so slow he hadn’t realized it. And after landing, the copilot had realized before McCormick had that a change in the thrust reverser setting would steer the airplane away from the fire station it had been heading for. A good system, and operators with good “crew resource management” skills, can tolerate mistakes and malfunctions amazingly well. Some call it luck, but it’s really a matter of resilience and redundancy. We know from cockpit records that surprisingly small problems can make for fatal distractions if this resiliency factor is absent. Early proof of its importance came on December 29, 1972, during the flight of Eastern Airlines Flight 401.